DMAIC and DFSS Methodologies

Why Six Sigma Is Not a Quick Fix (and Why Methodology Choice Matters)

In many organizations, improvement initiatives begin with urgency: missed SLAs, customer complaints, quality issues, rising costs, or delivery delays. The instinct is to “fix fast.” But Six Sigma is not a quick-fix toolkit—it is a disciplined, data-driven way to solve complex problems and build sustainable performance.

One of the biggest reasons Six Sigma programs fail to deliver business value is using the wrong methodology for the problem. Teams try to repair broken performance with ad-hoc actions, or they apply DMAIC to brand-new processes that haven’t stabilized yet. The result? Slow progress, low stakeholder confidence, and improvements that don’t sustain.

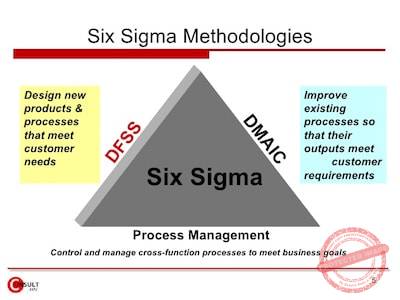

Six Sigma offers two powerful, purpose-built methodologies:

- DMAIC – Improve existing products and processes

- DFSS (DMADV) – Design new products and processes right the first time

Choosing the right methodology is the difference between temporary fixes and repeatable excellence.

What Is Six Sigma, Really?

Six Sigma is a structured problem-solving and design framework that focuses on reducing variation, preventing defects, and delivering customer value using data and statistical thinking. Organizations invest in Six Sigma not to produce reports, but to achieve outcomes.

Six Sigma works when it is applied strategically, not mechanically. That strategy begins with choosing DMAIC or DFSS based on whether the problem lies in an existing process or in a new design.

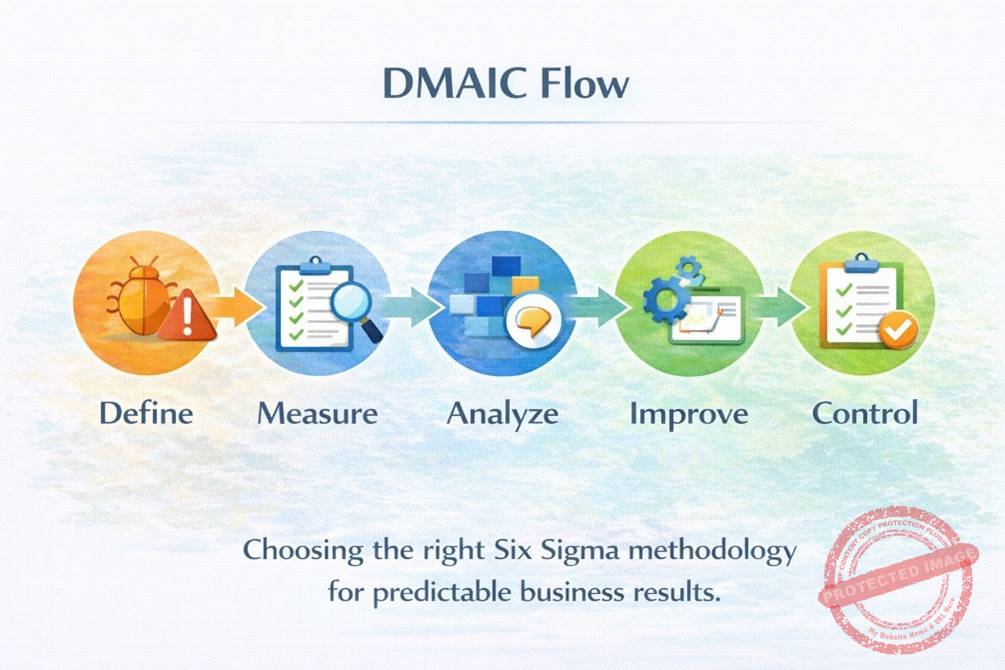

DMAIC Explained: Improve What Already Exists

DMAIC stands for Define, Measure, Analyze, Improve, Control. It is used to stabilize and improve existing processes or products that show inconsistent performance, high variation, or recurring defects.

When to Use DMAIC

Use DMAIC when:

- The process already exists and has historical data

- Performance is inconsistent or below target

- Root causes are unclear

- Rework and firefighting are frequent

- Customers complain about delays, errors, or reliability

Define: Anchor the Project in Customer Value

The Define phase anchors the project in customer value and business impact. Teams capture the Voice of the Customer (VOC), translate pain points into measurable critical-to-quality (CTQ) metrics such as % on-time delivery, turnaround-time (TAT) adherence, and defect rate, and formalize scope and governance.

Key outputs:

- Clear problem statement (metric + timeframe)

- SMART goal statement

- Business case (why this matters)

- Scope (in-scope / out-of-scope)

- Project charter and approvals

- High-level process map (SIPOC)

Measure: Build a Reliable Baseline

You can’t improve what you can’t measure—accurately. In Measure, teams define operational definitions, validate the measurement system, collect baseline data, and compute current capability (e.g., sigma level).

Key outputs:

- Operational definitions

- Measurement System Analysis (MSA)

- Data collection plan

- Baseline performance and capability
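As a rough illustration of the capability step, the sketch below converts a defect count into DPMO (defects per million opportunities) and then into a sigma level. It assumes the conventional 1.5-sigma long-term shift; the function names and the baseline figures are hypothetical and chosen only for illustration.

```python
from statistics import NormalDist


def dpmo(defects: int, units: int, opportunities_per_unit: int) -> float:
    """Defects per million opportunities."""
    total_opportunities = units * opportunities_per_unit
    if total_opportunities <= 0:
        raise ValueError("units and opportunities_per_unit must be positive")
    return defects / total_opportunities * 1_000_000


def sigma_level(dpmo_value: float, shift: float = 1.5) -> float:
    """Convert DPMO to a sigma level (assumes the conventional 1.5-sigma shift)."""
    if not 0 < dpmo_value < 1_000_000:
        raise ValueError("dpmo_value must be strictly between 0 and 1,000,000")
    process_yield = 1 - dpmo_value / 1_000_000
    # z-score of the yield under a standard normal, plus the assumed shift
    return NormalDist().inv_cdf(process_yield) + shift


# Hypothetical baseline: 38 defects in 5,000 units, 4 opportunities per unit.
baseline_dpmo = dpmo(defects=38, units=5000, opportunities_per_unit=4)
baseline_sigma = sigma_level(baseline_dpmo)
print(f"DPMO: {baseline_dpmo:.0f}, sigma level: {baseline_sigma:.2f}")
```

The same two functions can be rerun on post-improvement data, so the before and after figures are computed on the same basis.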

Analyze: Find the Real Root Causes (Not Opinions)

Analyze converts hypotheses into statistically validated root causes. Teams use Pareto analysis, stratification, hypothesis testing, and correlation/regression analysis to isolate the few causes that drive most of the variation.

Key outputs:

- Shortlist of statistically validated root causes

- Evidence linking causes to the CTQ
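A Pareto analysis is often the first pass at narrowing down candidate causes. The sketch below ranks defect causes by frequency and returns the "vital few" that account for a chosen share of all defects. The cause labels, counts, and the 80% cutoff are illustrative assumptions, and a Pareto ranking only prioritizes causes; it does not replace the hypothesis tests that validate them.

```python
from collections import Counter


def pareto_table(defect_causes):
    """Return (cause, count, cumulative_share) rows, most frequent cause first."""
    counts = Counter(defect_causes)
    total = sum(counts.values())
    rows = []
    cumulative = 0
    for cause, count in counts.most_common():
        cumulative += count
        rows.append((cause, count, cumulative / total))
    return rows


def vital_few(rows, cutoff=0.8):
    """Return the top causes needed to reach the cumulative cutoff share."""
    selected = []
    for cause, _count, cumulative_share in rows:
        selected.append(cause)
        if cumulative_share >= cutoff:
            break
    return selected


# Hypothetical defect log for a loan-processing workflow (100 records).
defect_log = (
    ["missing document"] * 45
    + ["data entry error"] * 30
    + ["system timeout"] * 15
    + ["wrong routing"] * 7
    + ["other"] * 3
)

rows = pareto_table(defect_log)
for cause, count, cumulative_share in rows:
    print(f"{cause:<18} {count:>3} {cumulative_share:.0%}")
print("Vital few:", vital_few(rows))
```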

Improve: Fix What Matters and Prove It Works

The Improve phase designs targeted solutions for the validated causes, pilots the changes, assesses risks using FMEA (Failure Mode and Effects Analysis), and confirms the gains with before-and-after comparisons.

Key outputs:

- Solution design aligned to root causes

- FMEA with mitigation actions

- Pilot results with statistical validation

- Quantified benefits (tangible & intangible)

- Rollout plan
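To make the FMEA step concrete, here is a minimal sketch that scores failure modes with a Risk Priority Number (RPN = severity × occurrence × detection) and ranks them for mitigation. It assumes the common 1 to 10 rating scales; the class name, the failure modes, and the ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    description: str
    severity: int    # 1 (negligible) to 10 (severe), assumed scale
    occurrence: int  # 1 (rare) to 10 (almost certain), assumed scale
    detection: int   # 1 (always detected) to 10 (undetectable), assumed scale

    def __post_init__(self):
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    @property
    def rpn(self) -> int:
        """Risk Priority Number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


def prioritize(failure_modes):
    """Sort failure modes so the highest RPN comes first."""
    return sorted(failure_modes, key=lambda mode: mode.rpn, reverse=True)


# Hypothetical failure modes for a piloted solution.
failure_modes = [
    FailureMode("New form field left blank", severity=6, occurrence=4, detection=3),
    FailureMode("Auto-routing rule misfires", severity=8, occurrence=5, detection=6),
    FailureMode("Training not completed", severity=5, occurrence=3, detection=2),
]

for mode in prioritize(failure_modes):
    print(f"RPN {mode.rpn:>3}  {mode.description}")
```

Teams usually attach mitigation actions to the highest-RPN items first and rescore them after the pilot.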

Control: Lock In the Gains

Control ensures improvements don’t fade. Teams institutionalize changes through standard operating procedures (SOPs), training, statistical process control (SPC) charts, and response plans.

Key outputs:

- Control plan

- Updated SOPs/work instructions

- SPC charts and monitoring cadence

- Handover to process owner
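One common SPC tool for monitoring a single metric over time is the individuals (I-MR) chart. The sketch below derives control limits from a stable baseline and flags new points that fall outside them. It uses the standard 2.66 constant for moving ranges of two consecutive points; the function names and the data are hypothetical, and a real control plan would typically add run rules and a documented response plan.

```python
from statistics import mean


def individuals_limits(values):
    """Return (LCL, center line, UCL) for an individuals control chart."""
    if len(values) < 2:
        raise ValueError("at least two observations are required")
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of size 2
    spread = 2.66 * mean(moving_ranges)
    return center - spread, center, center + spread


def out_of_control(values, limits):
    """Return indexes of points that fall outside the control limits."""
    lcl, _center, ucl = limits
    return [i for i, value in enumerate(values) if value < lcl or value > ucl]


# Hypothetical daily turnaround times (hours) after the improvement.
baseline = [10, 12, 11, 13, 12, 11, 10, 12]
limits = individuals_limits(baseline)
lcl, center, ucl = limits
print(f"LCL={lcl:.2f}  CL={center:.2f}  UCL={ucl:.2f}")

# New observations collected during the monitoring cadence.
new_points = [11, 16, 12]
print("Out-of-control indexes:", out_of_control(new_points, limits))
```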

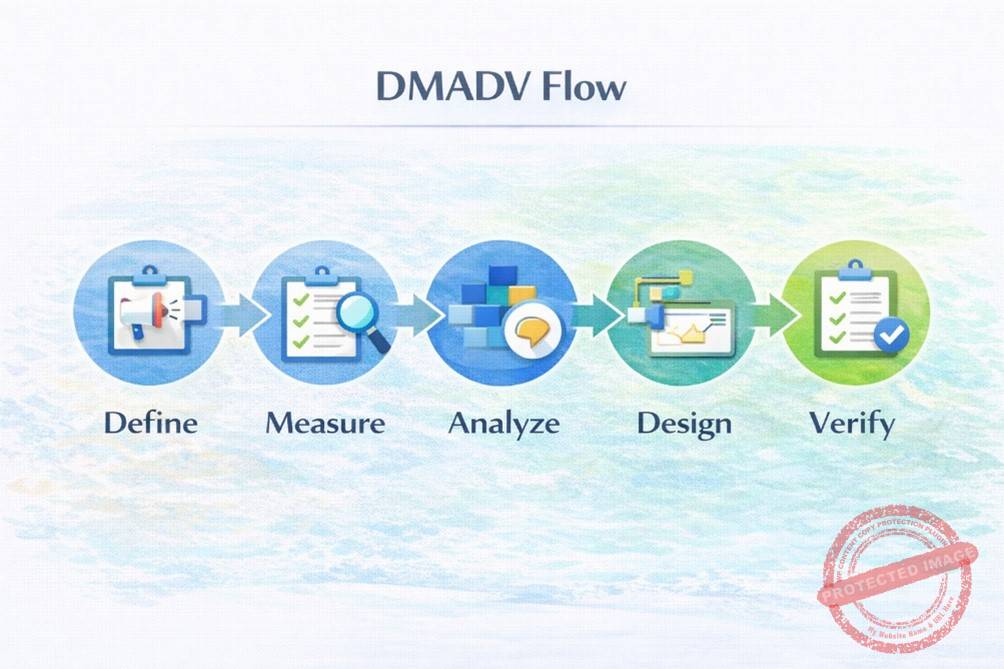

DFSS (DMADV) Explained: Design It Right the First Time

DFSS (Design for Six Sigma), often executed as DMADV (Define, Measure, Analyze, Design, Verify), is used when you are creating new products, services, or processes, or when existing designs fundamentally cannot meet customer needs.

When to Use DFSS (DMADV)

Use DMADV when:

- Launching a new product or service

- Designing a new digital workflow or platform

- Building a new operating model

- Current design cannot meet customer requirements

- Rework costs are high and prevention is cheaper

Define: Translate Customer Needs into Design Objectives

Define clarifies the design gap and aligns objectives with customer requirements and strategy.

Key outputs:

- VOC translated into CTQs

- Design objectives aligned to strategy

- High-level design scope and success criteria

Measure: Identify Critical Characteristics and Risks

Measure identifies the characteristics that must be designed to meet CTQs, assesses baseline capability (if any reference exists), and identifies design risks early.

Key outputs:

- Critical-to-quality characteristics

- Risk register for design

- Measurement approach for verification

Analyze: Compare Alternative Designs

Analyze explores multiple design concepts and evaluates trade-offs (cost, risk, feasibility, performance). The goal is to choose the best design, not the most familiar one.

Key outputs:

- Design alternatives

- Pros/cons and risk assessment

- Selected concept with rationale
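One simple way to compare design concepts on the trade-offs above is a weighted decision matrix. The sketch below is an illustration only: the criteria weights, the 1 to 5 ratings (higher is better, so a high "cost" or "risk" rating means low cost or low risk), and the concept names are all hypothetical, and a real evaluation would also record the rationale behind each rating.

```python
def score_concepts(weights, ratings):
    """Return the weighted average rating for each design concept."""
    total_weight = sum(weights.values())
    scores = {}
    for concept, concept_ratings in ratings.items():
        if concept_ratings.keys() != weights.keys():
            raise ValueError(f"{concept} must be rated on every criterion")
        weighted_sum = sum(
            weights[criterion] * concept_ratings[criterion] for criterion in weights
        )
        scores[concept] = weighted_sum / total_weight
    return scores


# Hypothetical criteria weights (relative importance).
weights = {"performance": 4, "cost": 3, "risk": 2, "feasibility": 1}

# Hypothetical ratings on a 1-5 scale, where higher is always better.
ratings = {
    "concept_a": {"performance": 5, "cost": 2, "risk": 3, "feasibility": 3},
    "concept_b": {"performance": 3, "cost": 4, "risk": 4, "feasibility": 5},
}

scores = score_concepts(weights, ratings)
best_concept = max(scores, key=scores.get)
for concept, score in scores.items():
    print(f"{concept}: {score:.2f}")
print("Selected concept:", best_concept)
```

Making the weights explicit helps keep the choice tied to the CTQs rather than to whichever concept the team already knows best.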

Design: Build the Best Solution

Design converts the chosen concept into detailed process/product designs, standards, and specifications.

Key outputs:

- Detailed design

- Process flows, SOP drafts

- Built-in quality and error-proofing

Verify: Pilot, Validate, and Launch

Verify pilots the design, validates performance against CTQs, documents standards, and transitions ownership to operations.

Key outputs:

- Pilot results vs CTQs

- Verification plan and evidence

- Final SOPs and training

- Handover to process owner
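The comparison of pilot results against CTQs can be kept very explicit. The following sketch checks each measured pilot value against its target and reports which CTQs were met. The CTQ names, targets, and pilot figures are hypothetical, and the pass/fail rule shown here ignores sampling uncertainty, which a real verification plan would need to address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CtqTarget:
    name: str
    target: float
    higher_is_better: bool


def verify_pilot(targets, pilot_results):
    """Return {ctq_name: True/False} showing whether each target was met."""
    report = {}
    for ctq in targets:
        measured = pilot_results[ctq.name]
        if ctq.higher_is_better:
            report[ctq.name] = measured >= ctq.target
        else:
            report[ctq.name] = measured <= ctq.target
    return report


# Hypothetical CTQ targets for a new digital onboarding workflow.
targets = [
    CtqTarget("on_time_completion_pct", target=95.0, higher_is_better=True),
    CtqTarget("error_rate_pct", target=1.0, higher_is_better=False),
    CtqTarget("avg_turnaround_hours", target=24.0, higher_is_better=False),
]

# Hypothetical measurements from the pilot.
pilot_results = {
    "on_time_completion_pct": 96.4,
    "error_rate_pct": 1.3,
    "avg_turnaround_hours": 20.5,
}

report = verify_pilot(targets, pilot_results)
for name, met in report.items():
    print(f"{name}: {'met' if met else 'NOT met'}")
print("Ready for launch:", all(report.values()))
```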

DMAIC vs DFSS (DMADV): Clear Comparison

| Dimension | DMAIC | DFSS (DMADV) |
| --- | --- | --- |
| Primary Use | Improve existing processes | Design new products/processes |
| Trigger | Performance gaps, variation | New design or unfit legacy design |
| Data | Historical baseline available | Design assumptions + pilots |
| Focus | Root cause elimination | Built-in quality from design |
| Risk Profile | Fix defects after they occur | Prevent defects before launch |
| Typical Outcomes | Stabilized KPIs, cost savings | Faster launches, fewer defects |

Real-World Use Cases (Across Industries)

| Industry | DMAIC – Improve Existing Process | DFSS (DMADV) – Design New Process/Product |
| --- | --- | --- |
| Manufacturing | Reduce scrap on an existing production line | Design a new assembly process for a new product |
| IT & ITES | Reduce ticket turnaround time (TAT) and rework | Design a new onboarding workflow for a new service |
| Banking / NBFC | Reduce loan processing errors | Design a new digital KYC journey |
| Healthcare | Reduce patient wait time | Design a new triage process |

Common Mistakes to Avoid

- Using DMAIC for brand-new processes (no stable baseline)

- Jumping to solutions before root cause validation

- Treating DFSS as a documentation exercise rather than a design discipline

- Ignoring pilot validation

- Skipping control plans and sustainability

Final Takeaway: Choose the Methodology That Matches the Problem

- Fix what exists → DMAIC

- Build what’s new → DFSS (DMADV)

When organizations match the methodology to the problem context, Six Sigma becomes a repeatable engine for business performance—not a one-time initiative.
